Doing the same with a procedural language requires an intermediate update to be sure that there is no duplicate at any time. And this kind of thing is the reason why I like SQL.

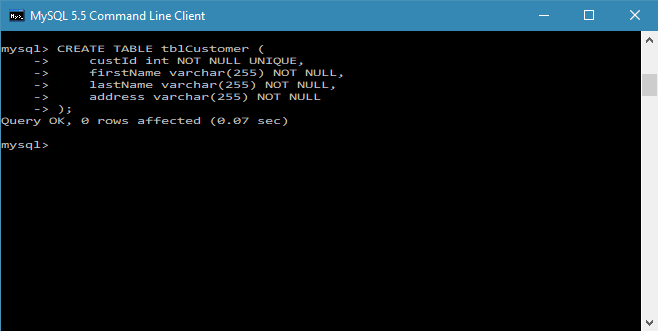

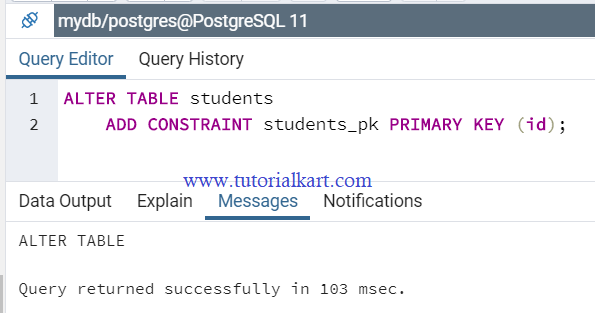

I compared it with the oldest version I have on my laptop (Oracle 7.3) to show that it is something I’ve never seen as a new feature because I started with Oracle 7. So, I compared with Oracle where this statement is always successful, because temporary violations that are resolved later, within the same statement, do not violate the constraint. I see no error at commit, but it simply tells me that it has done a rollback instead of the commit. My second surprise is that the failure of one statement cancels the whole transaction. So my statement failed and this in Postgres seems to fail the whole transaction: In addition to that, the SQL statements should behave as processing the set of rows rather than low-level row-by-row processing.īut there is also a very good thing: because the constraint is validated row by row, you know which value violates the constraint (here: “DETAIL: Key (n)=(1) already exists” ). This violates the Codd rule about physical independence. The bad thing is that the behavior of the application depends on the physical order of the rows and the order where they are processed. And by default, Postgres fails:ĮRROR: duplicate key value violates unique constraint "demo_pk" However, when we increase the value, we have a duplicate value until we process the next row. Decreasing the value doesn’t violate the uniqueness at any time because it reads rows from the beginning to the end. I’ve inserted the rows in ascending order of n. Here is the initial example with updating all rows:Ĭreate table demo as select generate_series n from generate_series(1,2) Īlter table demo add constraint demo_pk primary key(n) But at the end of the statement, the constraint is still valid. The unique constraint just ensures that we have only distinct values so that a select … order by will always return the values in the same order.Īll similar cases have the same consequence: when you process row by row the update, the uniqueness may be violated. When the user wants to move up one item, we just run an update on two rows, exchanging their numbers. A real-life example is a list of items, having probably a surrogate key as the primary key, and a unique key including an item number. It makes sense to use a primary key for that as it is unique and not null.Īctually, a better case would be a simple unique constraint where we just exchange two rows. The value must be known from the first insert (which means the columns are declared not null) and the value is never updated. For each table we need a key that is immutable to identify rows for referential integrity constraints, or for replication. I think I’ve never designed in real life a primary key that has to be updated later. But I’ve also a big admiration for what the Postgres community is doing. I have a big admiration for the Oracle design and architecture choices. And there’s no judgment behind this surprise: I can’t compare a software I use for more than 20 years with one I’m just learning. The goal of the tweet was exactly what I said above: show my surprise, using Oracle 7 as a reference because this is the version where I started to learn SQL. I recently posted a tweet about one of them, comparing latest Postgres version to earliest Oracle version I have on my laptop. While preparing it, I came upon some surprises because I’m so used to Oracle that I take some behavior as granted for any SQL databases. Oracle access paths because I know Oracle and I learn Postgres.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed